Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |


Packages

| Name | Version |
|---|---|
| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight, high-performance neural network framework designed for edge devices, while TensorRT is NVIDIA's high-performance deep learning inference engine for optimizing and running models on GPUs. LightNet-TRT uses TensorRT's Network Definition API to integrate LightNet into TensorRT, allowing it to run efficiently and in real time on edge devices.

Key Improvements

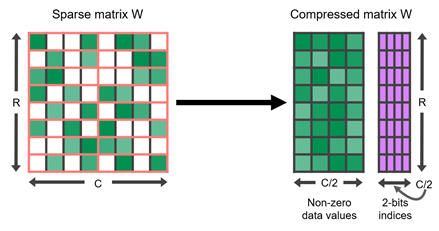

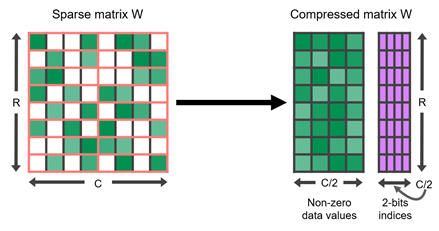

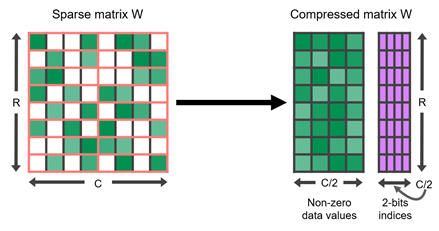

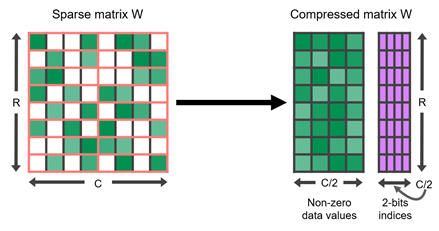

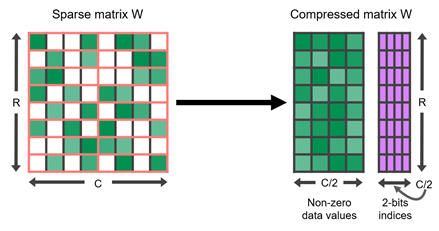

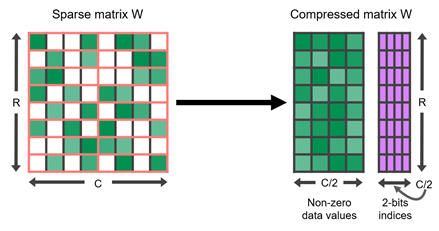

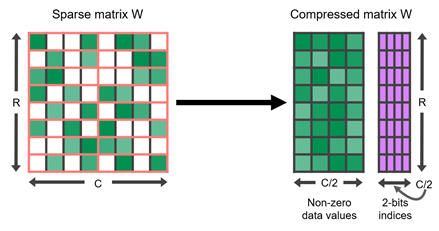

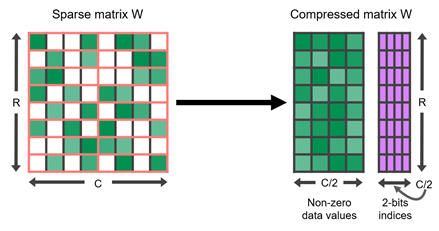

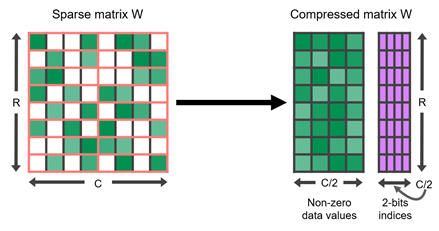

2:4 Structured Sparsity

LightNet-TRT uses 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that at least two values must be zero in each contiguous block of four values, so about 50% of the weights are removed. The network then needs fewer weights and computations while maintaining accuracy.
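The 2:4 pattern can be illustrated with a small sketch. It assumes magnitude-based pruning (keep the two largest-magnitude values in each block of four), which is a common way to reach the pattern but is not stated in this README as LightNet-TRT's method. The function names are hypothetical.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in each contiguous block of four.

    Assumption: magnitude-based selection; the README does not say how
    LightNet-TRT chooses which weights to zero.
    """
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for start in range(0, len(weights), 4):
        block = weights[start:start + 4]
        # Indices of the two largest-magnitude values, which are kept.
        keep = sorted(range(4), key=lambda i: abs(block[i]), reverse=True)[:2]
        pruned.extend(v if i in keep else 0.0 for i, v in enumerate(block))
    return pruned


def is_2_of_4_sparse(weights):
    """Check that every contiguous block of four holds at least two zeros."""
    return all(
        sum(1 for v in weights[s:s + 4] if v == 0) >= 2
        for s in range(0, len(weights), 4)
    )


weights = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.4, 0.01]
pruned = prune_2_of_4(weights)
print(pruned)                     # [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.4, 0.0]
print(is_2_of_4_sparse(pruned))   # True
```

Each block of four keeps exactly two non-zero values, so half of the weights are zero.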

NVDLA Execution

LightNet-TRT can also run the network on the NVIDIA Deep Learning Accelerator (NVDLA) [4], a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. Using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

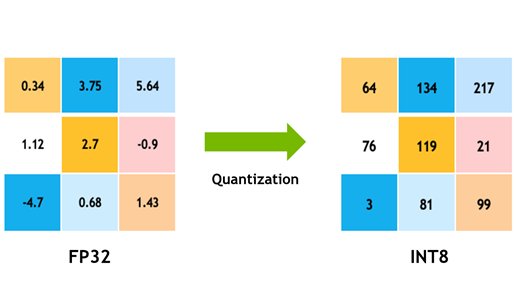

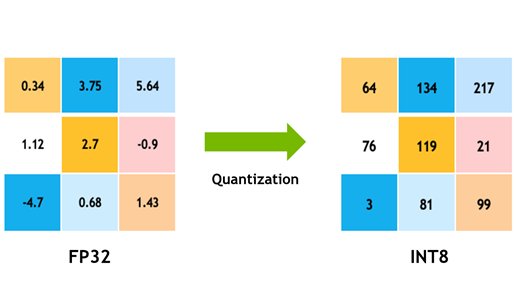

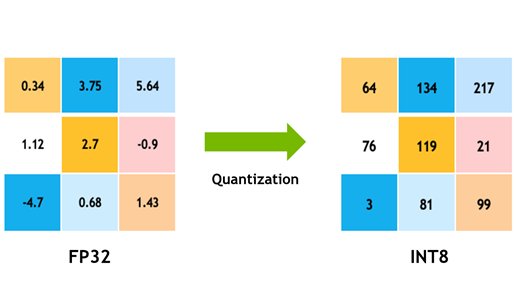

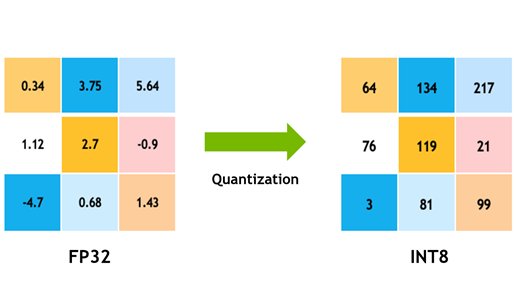

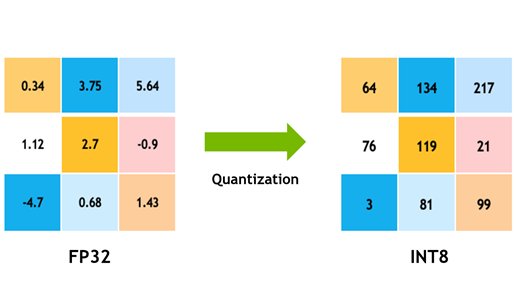

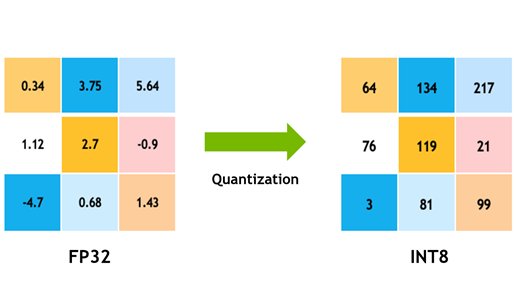

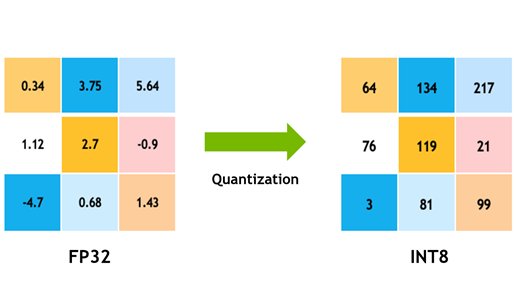

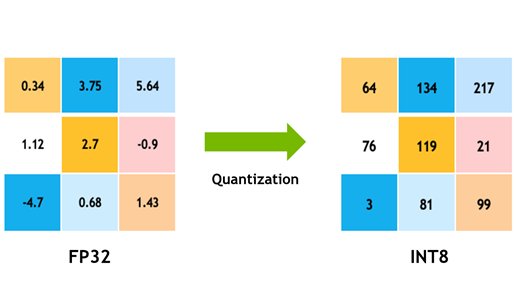

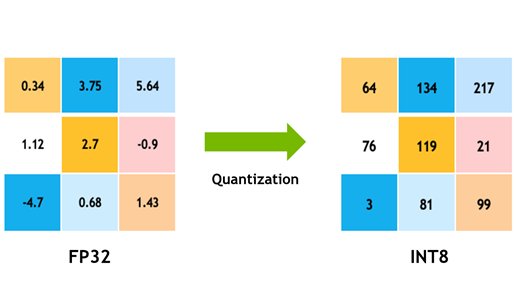

Multi-Precision Quantization

In addition to post-training quantization [5], LightNet-TRT supports multi-precision quantization, which allows the network to use different precisions for weights and activations. With mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. The precision of each layer of your CNN can be set in the CFG file.
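To make the memory argument concrete, here is a minimal sketch of per-layer precision and a simple symmetric INT8 quantizer. The layer names, sizes, and precision labels are hypothetical and do not reflect LightNet's CFG syntax. The max-abs scale is the simplest possible choice, assumed here for illustration rather than taken from TensorRT's calibration.

```python
# Bytes used to store one value at each precision.
BYTES_PER_VALUE = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_bytes(layers):
    """Total weight storage for a list of (name, num_weights, precision) tuples."""
    return sum(n * BYTES_PER_VALUE[p] for _, n, p in layers)


def quantize_int8(values):
    """Symmetric quantization: map floats to integers in [-127, 127] with one scale.

    Assumption: the scale is derived from the largest magnitude in `values`.
    """
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


# Hypothetical three-layer network; names and sizes are illustrative only.
uniform_fp32 = [("conv1", 1000, "fp32"), ("conv2", 4000, "fp32"), ("head", 500, "fp32")]
mixed = [("conv1", 1000, "fp16"), ("conv2", 4000, "int8"), ("head", 500, "fp16")]

print(weight_bytes(uniform_fp32))  # 22000
print(weight_bytes(mixed))         # 7000
```

In this toy case, keeping the large middle layer in INT8 and the others in FP16 cuts weight storage to roughly a third of the FP32 baseline.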

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cd build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA | DNN time on Jetson Orin Nano 4GB GPU | cfg | weights |
|---|---|---|---|---|---|---|---|---|---|---|---|
| lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github | GoogleDrive |
| LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive |
| lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github | GoogleDrive |
| LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive |

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only an iGPU with 512 CUDA cores.
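The DNN times in the table can be converted to an upper bound on throughput. The sketch below copies a few values from the table and computes frames per second as 1000 / time in ms. Because DNN time excludes pre-processing and post-processing, end-to-end frame rates will be lower. The dictionary layout and function name are illustrative.

```python
# DNN times in milliseconds, copied from the model table in this README.
dnn_time_ms = {
    "lightNet": {"RTX3080": 1.30, "Orin NX GPU": 7.6, "Orin NX DLA": 14.2},
    "lightNet pruning": {"RTX3080": 1.21, "Orin NX GPU": 8.89, "Orin NX DLA": 11.68},
}


def frames_per_second(time_ms):
    """Upper-bound throughput for a single inference stream."""
    return 1000.0 / time_ms


for model, times in dnn_time_ms.items():
    for device, ms in times.items():
        print(f"{model:18s} {device:12s} {ms:6.2f} ms  {frames_per_second(ms):7.1f} FPS")
```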

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8
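The calibration list for the INT8 build could be generated with a short script. This is a sketch under assumptions: the README does not show the list's format, so one image path per line is assumed here, and the helper name and default extensions are hypothetical.

```python
from pathlib import Path


def write_calibration_list(image_dir, list_path, extensions=(".jpg", ".jpeg", ".png")):
    """Write the absolute path of every image under image_dir to list_path.

    Assumption: the calibration list holds one image path per line.
    Returns the number of images written.
    """
    images = sorted(
        p for p in Path(image_dir).rglob("*")
        if p.is_file() and p.suffix.lower() in extensions
    )
    out = Path(list_path)
    out.parent.mkdir(parents=True, exist_ok=True)
    out.write_text("".join(f"{p.resolve()}\n" for p in images))
    return len(images)
```

For example, `write_calibration_list("path/to/images", "configs/calibration_images.txt")` would write one line per image found; the image directory here is a placeholder.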

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only iGPU with 512 CUDA cores.

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only iGPU with 512 CUDA cores.

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only iGPU with 512 CUDA cores.

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only iGPU with 512 CUDA cores.

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only iGPU with 512 CUDA cores.

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16(HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (You need to prepare a list for calibration in “configs/calibration_images.txt”.)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8

File truncated at 100 lines see the full file

CONTRIBUTING

Repository Summary

| Description | LightNet-TRT is a high-efficiency and real-time implementation of convolutional neural networks (CNNs) using Edge AI. |

| Checkout URI | https://github.com/daniel89710/lightnet-trt.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2023-10-03 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| lightnet_trt | 0.0.0 |

README

LightNet-TRT: High-Efficiency and Real-Time CNN Implementation on Edge AI

LightNet-TRT is a CNN implementation optimized for edge AI devices that combines the advantages of LightNet [1] and TensorRT [2]. LightNet is a lightweight and high-performance neural network framework designed for edge devices, while TensorRT is a high-performance deep learning inference engine developed by NVIDIA for optimizing and running deep learning models on GPUs. LightNet-TRT uses the Network Definition API provided by TensorRT to integrate LightNet into TensorRT, allowing it to run efficiently and in real-time on edge devices.

Key Improvements

2:4 Structured Sparsity

LightNet-TRT utilizes 2:4 structured sparsity [3] to further optimize the network. 2:4 structured sparsity means that two values must be zero in each contiguous block of four values, resulting in a 50% reduction in the number of weights. This technique allows the network to use fewer weights and computations while maintaining accuracy.

NVDLA Execution

LightNet-TRT also supports the execution of the neural network on the NVIDIA Deep Learning Accelerator (NVDLA) [4] , a free and open architecture that provides high performance and low power consumption for deep learning inference on edge devices. By using NVDLA, LightNet-TRT can further improve the efficiency and performance of the network on edge devices.

Multi-Precision Quantization

In addition to post training quantization [5], LightNet-TRT also supports multi-precision quantization, which allows the network to use different precision for weights and activations. By using mixed precision, LightNet-TRT can further reduce the memory usage and computational requirements of the network while still maintaining accuracy. By writing it in CFG, you can set the precision for each layer of your CNN.

Multitask Execution (Detection/Segmentation)

LightNet-TRT also supports multitask execution, allowing the network to perform both object detection and segmentation tasks simultaneously. This enables the network to perform multiple tasks efficiently on edge devices, saving computational resources and power.

Installation

Requirements

- CUDA 11.0 or higher

- TensorRT 8.0 or higher

- OpenCV 3.0 or higher

Steps

- Clone the repository.

$ git clone https://github.com/daniel89710/lightNet-TRT.git

$ cd lightNet-TRT

- Install libraries.

$ sudo apt update

$ sudo apt install libgflags-dev

$ sudo apt install libboost-all-dev

- Compile the TensorRT implementation.

$ mkdir build

$ cmake ../

$ make -j

Model

| Model | Resolutions | GFLOPS | Params | Precision | Sparsity | DNN time on RTX3080 | DNN time on Jetson Orin NX 16GB GPU | DNN time on Jetson Orin NX 16GB DLA| DNN time on Jetson Orin Nano 4GB GPU | cfg | weights | |—|—|—|—|—|—|—|—|—|—|—|—| | lightNet | 1280x960 | 58.01 | 9.0M | int8 | 49.8% | 1.30ms | 7.6ms | 14.2ms | 14.9ms | github |GoogleDrive | | LightNet+Semseg | 1280x960 | 76.61 | 9.7M | int8 | 49.8% | 2.06ms | 15.3ms | 23.2ms | 26.2ms | github | GoogleDrive| | lightNet pruning | 1280x960 | 35.03 | 4.3M | int8 | 49.8% | 1.21ms | 8.89ms | 11.68ms | 13.75ms | github |GoogleDrive | | LightNet pruning +SemsegLight | 1280x960 | 44.35 | 4.9M | int8 | 49.8% | 1.80ms | 9.89ms | 15.26ms | 23.35ms | github | GoogleDrive|

- “DNN time” refers to the time measured by IProfiler during the enqueueV2 operation, excluding pre-process and post-process times.

- Orin NX has three independent AI processors, allowing lightNet to be parallelized across a GPU and two DLAs.

- Orin Nano 4GB has only an iGPU with 512 CUDA cores.
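As a rough reading aid, the DNN times in the table can be converted to frame rates. The sketch below does this for the `lightNet` row. The combined Orin NX figure is an idealized upper bound that assumes the GPU and both DLAs each run an independent copy of the network with no contention; it is not a measured result, and none of the figures include pre-process or post-process time.

```python
# DNN time in milliseconds for the INT8 "lightNet" row of the table.
dnn_time_ms = {
    "RTX3080": 1.30,
    "Orin NX 16GB GPU": 7.6,
    "Orin NX 16GB DLA": 14.2,
    "Orin Nano 4GB GPU": 14.9,
}


def frames_per_second(latency_ms):
    """Convert a per-frame latency in milliseconds to frames per second."""
    return 1000.0 / latency_ms


for device, latency in dnn_time_ms.items():
    print(f"{device}: {latency} ms -> {frames_per_second(latency):.1f} FPS (DNN only)")

# Idealized bound for Orin NX: one GPU plus two DLAs working in parallel.
# Assumption: no contention for memory bandwidth or other shared resources.
orin_nx_upper_bound = (
    frames_per_second(dnn_time_ms["Orin NX 16GB GPU"])
    + 2 * frames_per_second(dnn_time_ms["Orin NX 16GB DLA"])
)
print(f"Orin NX GPU + 2 DLAs (idealized): {orin_nx_upper_bound:.1f} FPS")
```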

Usage

Converting a LightNet model to a TensorRT engine

Build FP32 engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kFLOAT

Build FP16 (HALF) engine

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kHALF

Build INT8 engine (you need to prepare a list of calibration images in “configs/calibration_images.txt”)

$ ./lightNet-TRT --flagfile ../configs/lightNet-BDD100K-det-semaseg-1280x960.txt --precision kINT8
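If you want to script the three builds, the commands above differ only in the `--precision` value. The following sketch assembles them from Python. The helper name `build_command` is hypothetical, and only the `--flagfile` and `--precision` flags shown above are used.

```python
import shlex

BINARY = "./lightNet-TRT"
FLAGFILE = "../configs/lightNet-BDD100K-det-semaseg-1280x960.txt"
PRECISIONS = ("kFLOAT", "kHALF", "kINT8")


def build_command(precision, flagfile=FLAGFILE):
    """Return the argument list for building an engine at the given precision."""
    if precision not in PRECISIONS:
        raise ValueError(f"unknown precision: {precision!r}")
    return [BINARY, "--flagfile", flagfile, "--precision", precision]


# Print the shell command for each precision without running anything.
for precision in PRECISIONS:
    print(shlex.join(build_command(precision)))
```

To actually run a build, pass the list to `subprocess.run(build_command("kHALF"), check=True)` from inside the `build` directory, since the flagfile path is relative to it.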

