Repository Summary

| Field | Value |
|---|---|
| Description | |
| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |
| VCS Type | git |
| VCS Version | main |
| Last Updated | 2022-08-09 |
| Dev Status | UNKNOWN |
| Released | UNRELEASED |
| Contributing | Help Wanted (-), Good First Issues (-), Pull Requests to Review (-) |

Packages

| Name | Version |
|---|---|
| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

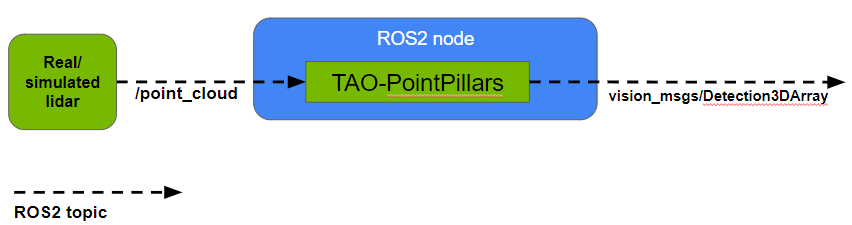

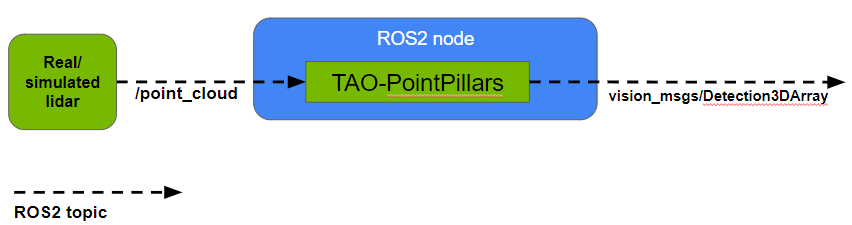

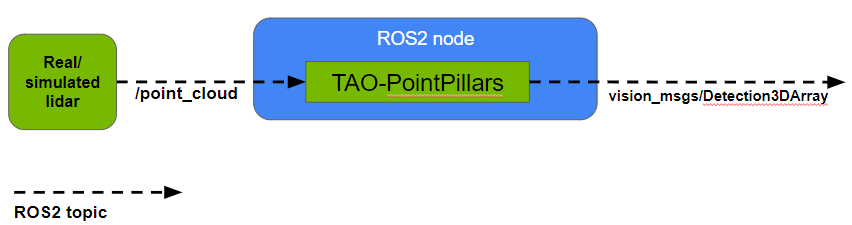

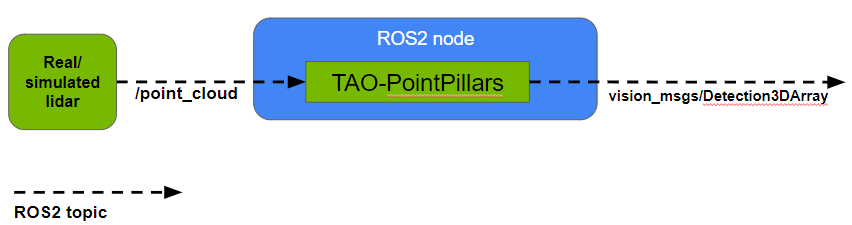

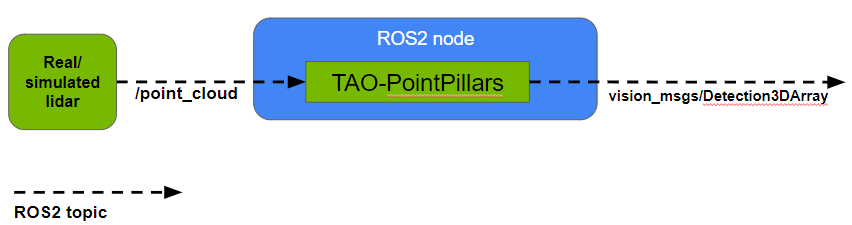

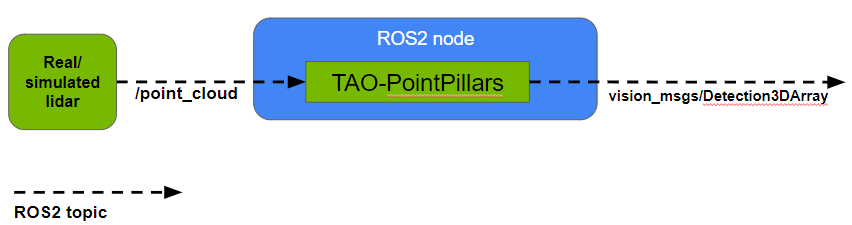

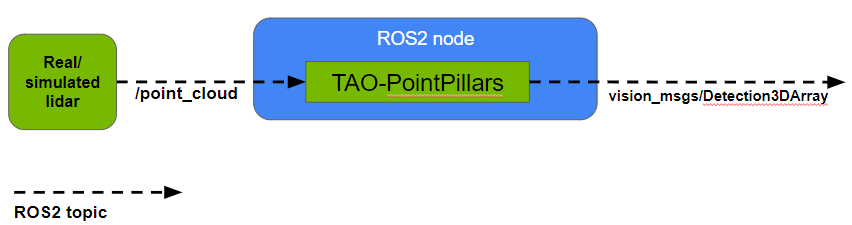

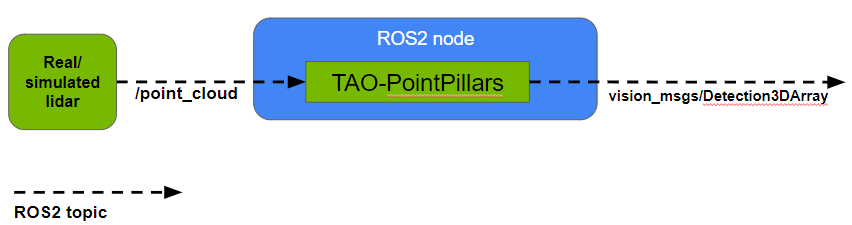

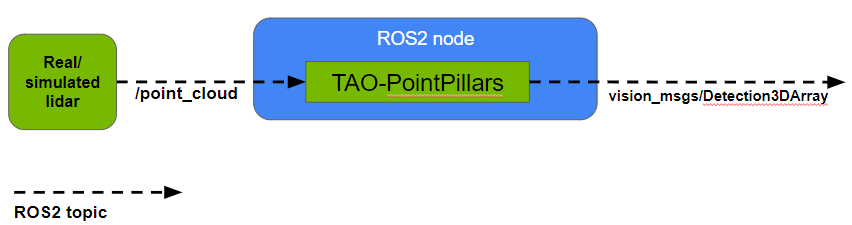

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic /point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here.

- Output: Outputs inference results in Detection3DArray format on the topic /bbox. This contains the class ID, score and 3D bounding box information of detected objects.

- Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.
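The four-feature requirement can be illustrated with a small sketch that packs and unpacks points as consecutive little-endian float32 values. This layout, the 16-byte point step and the field order x, y, z, intensity are assumptions made for illustration only; a real PointCloud2 message describes its own layout in its `fields`, `point_step` and `is_bigendian` members. The helper names are hypothetical and are not part of this package.

```python
import struct

# Assumed layout: four little-endian float32 values per point (x, y, z, intensity).
POINT_FORMAT = "<ffff"
POINT_STEP = struct.calcsize(POINT_FORMAT)  # 16 bytes under this assumption


def pack_points(points):
    """Serialize an iterable of (x, y, z, intensity) tuples into raw bytes."""
    return b"".join(struct.pack(POINT_FORMAT, *p) for p in points)


def unpack_points(data):
    """Recover (x, y, z, intensity) tuples from a raw byte buffer."""
    if len(data) % POINT_STEP != 0:
        raise ValueError("buffer length is not a multiple of the point step")
    return list(struct.iter_unpack(POINT_FORMAT, data))


# Two example points; the values are chosen to be exactly representable in float32.
cloud = [(1.0, 2.0, 0.5, 0.25), (-3.0, 4.0, 1.5, 0.75)]
raw = pack_points(cloud)
decoded = unpack_points(raw)
```

A buffer whose length is not a multiple of the point step is rejected, which is a cheap way to catch a cloud that carries more or fewer than four features per point.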

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2 (or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \
    -e $USER_DIR/trt.engine \
    -p points,1x204800x4,1x204800x4,1x204800x4 \
    -p num_points,1,1,1 \
    -t fp16 \
    $USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).
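As a rough illustration of how the arguments above fit together, the following sketch assembles the same command line from a batch size, a maximum point count and a feature count. It assumes that each `-p` value is the input name followed by three shapes (commonly the minimum, optimal and maximum shapes of an optimization profile) and that the three are identical, as in the example command. The function names are hypothetical; only the flags shown in the example are used.

```python
import shlex


def shape_profile(name, dims):
    """Format one -p value: the input name, then the same shape three times."""
    shape = "x".join(str(d) for d in dims)
    return f"{name},{shape},{shape},{shape}"


def tao_converter_args(key, engine_path, model_path, batch=1,
                       max_points=204800, features=4, dtype="fp16"):
    """Build the argument list for the tao-converter call shown above."""
    if dtype not in ("fp32", "fp16"):
        raise ValueError("dtype must be 'fp32' or 'fp16'")
    return [
        "tao-converter",
        "-k", key,
        "-e", engine_path,
        "-p", shape_profile("points", (batch, max_points, features)),
        "-p", shape_profile("num_points", (batch,)),
        "-t", dtype,
        model_path,
    ]


# Placeholder key and paths, for illustration only.
args = tao_converter_args("KEY", "trt.engine", "model.etlt")
command = shlex.join(args)
```

The resulting list can be passed to `subprocess.run(args, check=True)` on a machine where tao-converter is installed; building a list rather than a single string avoids shell quoting problems in the key or paths.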

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash

- Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

- Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.
+- pointpillars_ws
   +- src
      +- CMakeLists.txt
      +- package.xml
      +- include
      +- launch
      +- src

- Resolve missing dependencies by running the following command from pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

- Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

- Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py

- Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

  - header: The time stamp and frame id following this format.

  - detections: List of detected objects with the following information for each:

    - class ID

    - score

    - X, Y and Z coordinates of object bounding box center

    - length, width and height of bounding box

    - yaw (orientation) of bounding box in 3D Euclidean space
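To make the box fields above concrete, here is a minimal sketch that converts a yaw angle to a quaternion and back, and computes the ground-plane footprint of a box from its center, length, width and yaw. It assumes that yaw is a rotation in radians about the z axis, and that length lies along the box's local x axis and width along its local y axis. These conventions and the function names are illustrative assumptions, not taken from the node's source.

```python
import math


def yaw_to_quaternion(yaw):
    """Quaternion (x, y, z, w) for a rotation of `yaw` radians about the z axis."""
    return (0.0, 0.0, math.sin(yaw / 2.0), math.cos(yaw / 2.0))


def quaternion_to_yaw(q):
    """Recover the rotation about z from a quaternion given as (x, y, z, w)."""
    x, y, z, w = q
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


def footprint_corners(cx, cy, length, width, yaw):
    """Four (x, y) corners of the box footprint in the ground plane."""
    c, s = math.cos(yaw), math.sin(yaw)
    half_l, half_w = length / 2.0, width / 2.0
    # Corner offsets in the box frame, listed counter-clockwise.
    offsets = [(half_l, half_w), (-half_l, half_w),
               (-half_l, -half_w), (half_l, -half_w)]
    # Rotate each offset by yaw, then translate by the box center.
    return [(cx + dx * c - dy * s, cy + dx * s + dy * c) for dx, dy in offsets]


# Example: a 4 m x 2 m box centered at the origin with zero yaw.
corners = footprint_corners(0.0, 0.0, 4.0, 2.0, 0.0)
```

The footprint is often all that is needed to draw detections in a top-down view; the box height and the Z coordinate of the center only matter for a full 3D rendering.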

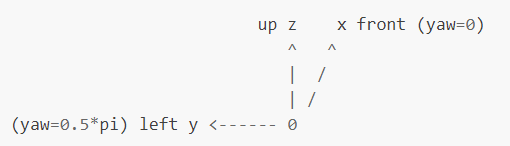

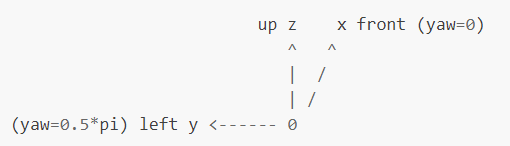

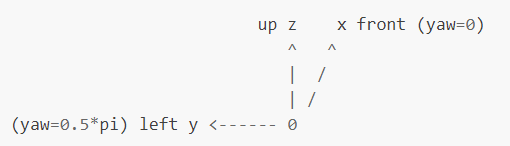

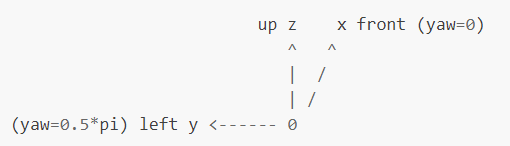

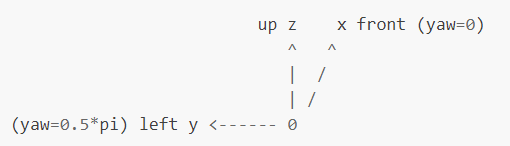

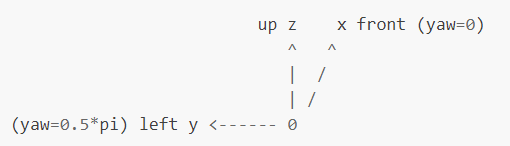

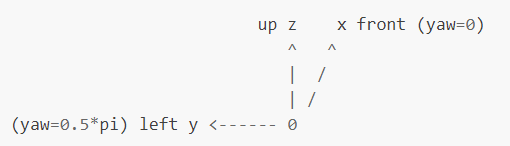

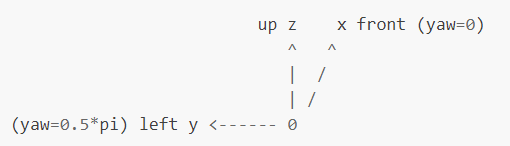

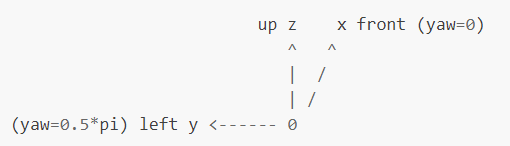

The resulting bounding box coordinates follow the coordinate system below, with the origin at the center of the lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

- header: The time stamp and frame id following this format.

- detections: List of detected objects with following information for each:

- class ID

- score

- X, Y and Z coordinates of object bounding box center

- length, width and height of bounding box

- yaw (orientation) of bounding box in 3D Euclidean space

The resulting bounding box coordinates follow the coordinate system below with origin at the center of lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

- header: The time stamp and frame id following this format.

- detections: List of detected objects with following information for each:

- class ID

- score

- X, Y and Z coordinates of object bounding box center

- length, width and height of bounding box

- yaw (orientation) of bounding box in 3D Euclidean space

The resulting bounding box coordinates follow the coordinate system below with origin at the center of lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

- header: The time stamp and frame id following this format.

- detections: List of detected objects with following information for each:

- class ID

- score

- X, Y and Z coordinates of object bounding box center

- length, width and height of bounding box

- yaw (orientation) of bounding box in 3D Euclidean space

The resulting bounding box coordinates follow the coordinate system below with origin at the center of lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

- header: The time stamp and frame id following this format.

- detections: List of detected objects with following information for each:

- class ID

- score

- X, Y and Z coordinates of object bounding box center

- length, width and height of bounding box

- yaw (orientation) of bounding box in 3D Euclidean space

The resulting bounding box coordinates follow the coordinate system below with origin at the center of lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

- header: The time stamp and frame id following this format.

- detections: List of detected objects with following information for each:

- class ID

- score

- X, Y and Z coordinates of object bounding box center

- length, width and height of bounding box

- yaw (orientation) of bounding box in 3D Euclidean space

The resulting bounding box coordinates follow the coordinate system below with origin at the center of lidar:

CONTRIBUTING

Repository Summary

| Description | |

| Checkout URI | https://github.com/nvidia-ai-iot/ros2_tao_pointpillars.git |

| VCS Type | git |

| VCS Version | main |

| Last Updated | 2022-08-09 |

| Dev Status | UNKNOWN |

| Released | UNRELEASED |

| Contributing |

Help Wanted (-)

Good First Issues (-) Pull Requests to Review (-) |

Packages

| Name | Version |

|---|---|

| pp_infer | 0.0.0 |

README

ROS2 node for TAO-PointPillars

This is a ROS2 node for 3D object detection in point clouds using TAO-PointPillars for inference with TensorRT.

Node details:

- Input: Takes point cloud data in PointCloud2 format on the topic

/point_cloud. Each point in the data must contain 4 features - (x, y, z) position coordinates and intensity. ROS2 bags for testing the node, provided by Zvision, can be found here. - Output: Outputs inference results in Detection3DArray format on the topic

/bbox. This contains the class ID, score and 3D bounding box information of detected objects. - Inference model: You can train a model on your own dataset using NVIDIA TAO Toolkit following instructions here. We used a TensorRT engine generated from a TAO-PointPillars model trained to detect objects of 3 classes - Vehicle, Pedestrian and Cyclist.

Requirements

Tested on Ubuntu 20.04 and ROS2 Foxy.

- TensorRT 8.2(or above)

- TensorRT OSS 22.02 (see how to install below)

git clone -b 22.02 https://github.com/NVIDIA/TensorRT.git TensorRT

cd TensorRT

git submodule update --init --recursive

mkdir -p build && cd build

cmake .. -DCUDA_VERSION=$CUDA_VERSION -DGPU_ARCHS=$GPU_ARCHS

make nvinfer_plugin -j$(nproc)

make nvinfer_plugin_static -j$(nproc)

cp libnvinfer_plugin.so.8.2.* /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin.so.8.2.3

cp libnvinfer_plugin_static.a /usr/lib/$ARCH-linux-gnu/libnvinfer_plugin_static.a

Usage

- This project assumes that you have already trained your model using NVIDIA TAO Toolkit and have an .etlt file. If not, please refer here for information on how to do this. The pre-trained PointPillars model used by this project can be found here.

- Use tao-converter to generate a TensorRT engine from your model. For instance:

tao-converter -k $KEY \

-e $USER_DIR/trt.engine \

-p points,1x204800x4,1x204800x4,1x204800x4 \

-p num_points,1,1,1 \

-t fp16 \

$USER_DIR/model.etlt

Argument definitions:

- -k: User-specific encoding key to save or load an etlt model.

- -e: Location where you want to store the resulting TensorRT engine.

- -p points: (N x P x 4), where N is the batch size, P is the maximum number of points in a point cloud file, 4 is the number of features per point.

- -p num_points: (N,), where N is the batch size as above.

- -t: Desired engine data type. The options are fp32 or fp16 (default value is fp32).

- Source your ROS2 environment:

source /opt/ros/foxy/setup.bash - Create a ROS2 workspace (more information can be found here):

mkdir -p pointpillars_ws/src

cd pointpillars_ws/src

Clone this repository in pointpillars_ws/src. The directory structure should look like this:

.

+- pointpillars_ws

+- src

+- CMakeLists.txt

+- package.xml

+- include

+- launch

+- src

- Resolve missing dependencies by running the following command from

pointpillars_ws:

rosdep install -i --from-path src --rosdistro foxy -y

-

Specify parameters including the path to your TensorRT engine in the launch file. Please see Modifying parameters in the launch file below for how to do this.

-

Build and source the package files:

colcon build --packages-select pp_infer

. install/setup.bash

- Run the node using the launch file:

ros2 launch pp_infer pp_infer_launch.py - Make sure data is being published on the /point_cloud topic. If your point cloud data is being published on a different topic name, you can remap it to /point_cloud (please see Modifying parameters in the launch file below). For good performance, point cloud input data should be from the same lidar and configuration that was used for training the model.

- Inference results will be published on the /bbox topic as Detection3DArray messages. Each Detection3DArray message has the following information:

  - header: The time stamp and frame ID, following this format.
  - detections: List of detected objects, with the following information for each:
    - class ID
    - score
    - X, Y and Z coordinates of the bounding box center
    - length, width and height of the bounding box
    - yaw (orientation) of the bounding box in 3D Euclidean space
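To make the box parameters concrete, here is a minimal sketch that turns a center, size and yaw into the eight corner points of a box. It assumes that length lies along the box's local x axis, width along y and height along z, and that yaw is a rotation about the z axis in radians; these conventions are assumptions for illustration and should be checked against your model and lidar setup. Because Detection3DArray carries orientation as a quaternion, the sketch also includes a yaw/quaternion conversion, assuming a pure rotation about z. All function names are hypothetical.

```python
import math


def yaw_to_quaternion(yaw):
    """Quaternion (x, y, z, w) for a rotation of `yaw` radians about +z."""
    return (0.0, 0.0, math.sin(yaw / 2.0), math.cos(yaw / 2.0))


def quaternion_to_yaw(x, y, z, w):
    """Recover the rotation about z from a quaternion (x, y, z, w)."""
    return math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))


def box_corners(cx, cy, cz, length, width, height, yaw):
    """Return the 8 corners of a yaw-rotated box as (x, y, z) tuples.

    Assumes length is along local x, width along local y, height along z.
    """
    c, s = math.cos(yaw), math.sin(yaw)
    corners = []
    for dx in (-0.5, 0.5):
        for dy in (-0.5, 0.5):
            for dz in (-0.5, 0.5):
                # Offset from the center in the box's own frame.
                lx, ly, lz = dx * length, dy * width, dz * height
                # Rotate about z by yaw, then translate to the center.
                corners.append((cx + c * lx - s * ly,
                                cy + s * lx + c * ly,
                                cz + lz))
    return corners


# Example: a 4 m x 2 m x 1.5 m box, 10 m ahead, rotated by 90 degrees.
for corner in box_corners(10.0, 0.0, 0.0, 4.0, 2.0, 1.5, math.pi / 2):
    print(corner)
```

Corner points like these are convenient for drawing detections in a viewer or for checking whether a box overlaps a region of interest.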

The resulting bounding box coordinates follow the coordinate system below, with the origin at the center of the lidar:
